Google Coral USB Accelerator Review: Worth It or Not?

The shock isn’t that the Google Coral USB Accelerator can cut inference times from about 80 ms on CPU to 10 ms; it’s how many users say it quietly transformed their multi-camera setups by sharply reducing CPU load. Based on hundreds of real-world reports across Reddit, GitHub, and Hacker News, it scores 7.5/10: powerful for the right workloads, but hampered by supply shortages, outdated ecosystem support, and strong competition from newer AI accelerators.

Quick Verdict: Conditional — Worth buying for lightweight, real-time object detection on platforms like Frigate, but limited by compatibility, model size, and Google’s slow updates.

| Pros | Cons |
|---|---|
| Massive drop in inference times (up to 8–10× faster than CPU) | Overheating issues in USB form factor |
| Cuts CPU usage dramatically for multi-camera setups | Ecosystem stagnation since 2019 |
| Very low power draw (~0.5 W per TOPS) | Supports only small TensorFlow Lite models |
| Easy plug-and-play setup across Linux, Mac, Windows | Poor availability and frequent stockouts |
| Works well with Raspberry Pi and embedded devices | No benefit for single-image analysis due to load overhead |
| Power-efficient for 24/7 object detection | Faster, more versatile alternatives now exist |

Claims vs Reality

Marketing claims that the Coral’s Edge TPU can run MobileNet V2 at almost 400 FPS while drawing roughly 0.5 W per TOPS have a basis in truth, but mostly under ideal conditions with optimized TensorFlow Lite models. Reddit users running Frigate NVR often cite “10 ms inference speed” and CPU usage falling from 75% to 30% after adding a Coral to an i5-based system.

However, this efficiency doesn’t extend to all workloads. GitHub user christianbaun documented slower single-image processing times on a Raspberry Pi 4—taking 3.8 seconds with Coral vs 1.1 seconds on CPU—due to the overhead of loading the model into TPU memory for each isolated image. As they concluded, “working with folders of images performs much better… single-image mode has 30× worse performance.”
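A simple cost model makes the single-image penalty easier to see. The sketch below assumes a fixed one-time model-load cost plus a constant per-image inference cost. It treats the reported 3.8 seconds as load plus one inference, and borrows the 10 ms inference figure from the Frigate reports, which come from different hardware. The function name and both constants are illustrative assumptions, not measurements.

```python
def average_seconds_per_image(load_s: float, infer_s: float, n_images: int) -> float:
    """Average wall time per image when the model is loaded once for n_images."""
    if n_images < 1:
        raise ValueError("n_images must be at least 1")
    return (load_s + infer_s * n_images) / n_images


# Assumed values for illustration only (not measured here):
INFER_S = 0.010          # ~10 ms per inference, as cited by Frigate users
LOAD_S = 3.8 - INFER_S   # remainder of the reported 3.8 s single-image run

for n in (1, 10, 100, 1000):
    avg = average_seconds_per_image(LOAD_S, INFER_S, n)
    print(f"{n:>5} images: {avg:.3f} s/image")
```

Under these assumptions the load cost dominates a one-off run and fades as the image count grows, which fits the report that folders of images perform far better than single-image mode.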

Google promotes compatibility with Linux, macOS, and Windows, but Hacker News contributors note that “it’s basically abandoned at this point and only works with older versions of Python.” This mirrors wider frustration: while USB plug-and-play works, long-term ecosystem updates and support for newer operators have stalled.

Cross-Platform Consensus

Universally Praised

For home surveillance users, Coral’s impact is clear. Reddit user alan pilz saw inference times drop from around 80 ms on CPU to 10 ms with Coral, allowing more cameras and lower latency. Another Frigate user running 10 × 1080p streams on an i5-8500T reported CPU usage around 65% with Coral, compared to maxing out without it. On low-power hardware like Raspberry Pi, its USB form factor and low power draw make it appealing for continuous object detection.

These gains translate into reliability: with inference handled in ~10 ms, detection pipelines avoid the bottlenecks that cause missed events or high-latency alerts. “Definitely was worth it… wayyy faster than CPU,” one user summed up after adding Coral to their setup.

Common Complaints

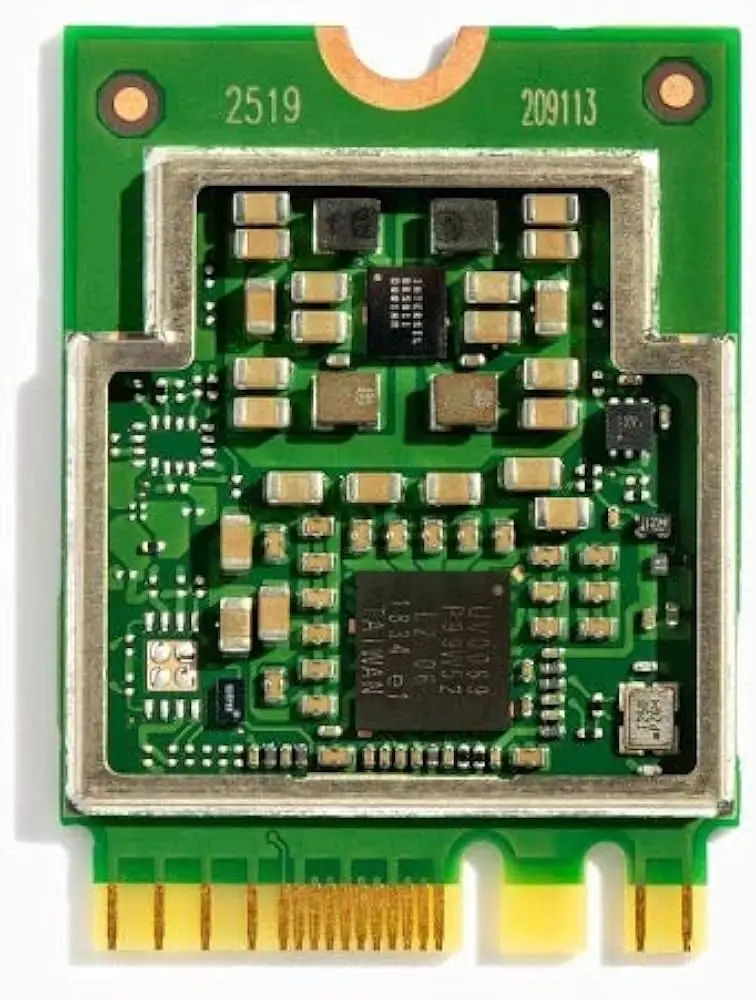

A recurring frustration is overheating in the USB model. One owner bluntly stated: “It overheats unless you put them in high efficiency (low performance) mode which defeats the purpose.” The community often recommends the mPCIe or M.2 variant as more reliable for 24/7 use. Stock availability is another major headache—many spent months chasing restocks or overpaid during scarcity, with prices hitting $200–$300 on resale markets. GitHub threads chronicle endless slipping delivery dates and high scalper mark-ups.

Then there’s Google’s slow-moving support. Several Hacker News comments call the ecosystem “stagnant since 2019,” pointing out that model support hasn’t evolved and documentation lags behind competitors like Hailo. Many hit roadblocks porting YOLO models, with some giving up entirely.

Divisive Features

Its ultra-low power draw (~0.5 W/TOPS) earns praise from energy-conscious users, especially compared to GPUs. But others note that modern ARM SBCs or Intel’s OpenVINO can match or beat Coral’s inference speeds at similar or lower total system wattage. One home server user calculated GPU-based detection at “less than 0.1 W per camera” using an Intel Xe iGPU.

Model size constraints also split opinions. For lightweight detectors like MobileNet SSD, Coral’s performance is stellar. For complex or larger models, users hit memory ceilings quickly—estimated at just 8 MiB of internal SRAM.

Trust & Reliability

Long-term users report solid durability—several have run USB or M.2 Corals for 2+ years in Frigate with consistent inference speeds. Yet this trust is undercut by perceptions that Google may abandon the product line, as they have with other hardware. One Hacker News observer quipped: “I expected they’d abandon the board within 2 years tops… which is exactly what happened.”

When shortages were at their worst in 2021–22, scalpers dominated supply, and some buyers turned to questionable sources. Verified GitHub accounts documented stolen packages mid-shipment, while others reported inflated prices from authorized distributors. This scarcity also fuels experimentation with alternatives.

Alternatives

The most cited competitors are Hailo AI accelerators and Nvidia Jetson kits. Hailo’s Pi-compatible HATs offer 13–26 TOPS for $80–$135, versus Coral’s 4 TOPS at ~$100. Many users who switched praised their new hardware as “more powerful… compatible with far more models.” The Jetson Orin Nano Super delivers up to 67 TOPS for $250 and can even run large language models, though it’s overkill for pure object detection.
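For a rough sense of value, the quoted figures can be reduced to TOPS per dollar. This is a back-of-the-envelope sketch. It assumes the low end of Hailo's TOPS range pairs with the low end of its price range (and high with high), which the figures above do not state outright. Vendor TOPS numbers are also not strictly comparable across chips. The `Accelerator` class is a hypothetical helper for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    name: str
    tops: float
    price_usd: float

    @property
    def tops_per_dollar(self) -> float:
        return self.tops / self.price_usd


devices = [
    Accelerator("Coral USB", 4, 100),
    Accelerator("Hailo HAT (13 TOPS)", 13, 80),    # assumed pairing: low TOPS, low price
    Accelerator("Hailo HAT (26 TOPS)", 26, 135),   # assumed pairing: high TOPS, high price
    Accelerator("Jetson Orin Nano Super", 67, 250),
]

# Print devices from best to worst raw throughput per dollar.
for d in sorted(devices, key=lambda d: d.tops_per_dollar, reverse=True):
    print(f"{d.name:<24} {d.tops_per_dollar:.3f} TOPS/$")
```

On these numbers Coral trails every alternative on raw throughput per dollar. Its case rests on power draw and simplicity rather than headline TOPS.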

Intel’s OpenVINO framework also appears repeatedly as an alternative: one user handled 5+ cameras using a <$200 Intel mini-PC with great results, avoiding Coral’s stock issues and model limitations.

Price & Value

Prices have varied wildly, from $55 in early availability to $450 during the pandemic shortage. Current listings on eBay hover around $149–$196 plus shipping. Amazon US has fluctuated between $139 and $145. Resale value stays high due to ongoing demand for Frigate setups, but the community warns against overpaying given viable substitutes.

Buying tips include watching niche EU resellers that bundle Coral with Raspberry Pi kits, pre-ordering from authorized distributors, and avoiding scalpers. As one Redditor noted after scoring a bundle: “It was nearly impossible to source… got it in my grubby hands in the UK in 4 days.”

FAQ

Q: Does the Coral USB Accelerator improve single-image inference?

A: No—due to TPU memory load overhead, it can be slower than CPU for single images. It shines in continuous video or batch image directories.

Q: Is it compatible with non-TensorFlow Lite models?

A: Not directly. Models need compiling for Edge TPU via TensorFlow Lite; PyTorch conversions can be tricky and error-prone.

Q: How many cameras can it handle in Frigate?

A: Reports show smooth performance with 6–10 × 1080p streams at low CPU usage, but decoding is still CPU/GPU-bound.
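A rough capacity estimate follows from the inference time alone. The sketch below assumes one inference per analysed frame, a detection rate of 5 frames per second per camera, and 20% headroom. All three are illustrative assumptions. Real pipelines may run several inferences per frame or skip frames without motion, and `max_cameras` is a hypothetical helper, not part of Frigate.

```python
def max_cameras(inference_ms: float, detect_fps: float, headroom: float = 0.8) -> int:
    """Estimate how many camera streams one accelerator can serve.

    inference_ms: time per inference on the accelerator
    detect_fps:   frames per second analysed per camera (assumed)
    headroom:     fraction of accelerator time we are willing to use
    """
    if inference_ms <= 0 or detect_fps <= 0 or not 0 < headroom <= 1:
        raise ValueError("inference_ms and detect_fps must be positive; headroom in (0, 1]")
    inferences_per_second = 1000.0 / inference_ms
    return int(inferences_per_second * headroom / detect_fps)


# ~10 ms per inference as cited by Frigate users; 5 FPS detection is an assumption.
print(max_cameras(inference_ms=10, detect_fps=5))
```

This only bounds the accelerator's share of the work. Video decoding, as noted, stays on the CPU or GPU and is often the tighter limit.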

Q: Does it work across OS platforms?

A: Yes. Linux, macOS, and Windows 10 are supported. Most community usage centers on Debian-based Linux (Raspberry Pi, Ubuntu).

Q: How does it compare to Hailo HATs or Jetson kits?

A: Coral has lower TOPS and narrower model support, but wins in low power draw, USB plug-and-play, and cost when in stock.

Final Verdict

Buy if you’re running continuous video detection with lightweight models on low-to-midrange hardware—especially for Frigate NVR setups where CPU load and inference latency bottlenecks matter. Avoid if you need large model support, single-image processing, or future-proof guarantees. Pro tip from the community: choose the M.2 or mPCIe variant over USB to avoid overheating, and watch trusted resellers for bundles to dodge scalper prices.